I’m on the Digital and Technology Fast Stream, and currently coming to the end of my first placement as a performance analyst in the Home Office.

My role here has been to help develop the service performance function, looking at ways to measure the success of a service. We need to justify our services through tangible metrics, otherwise we end up wasting time, money and resources.

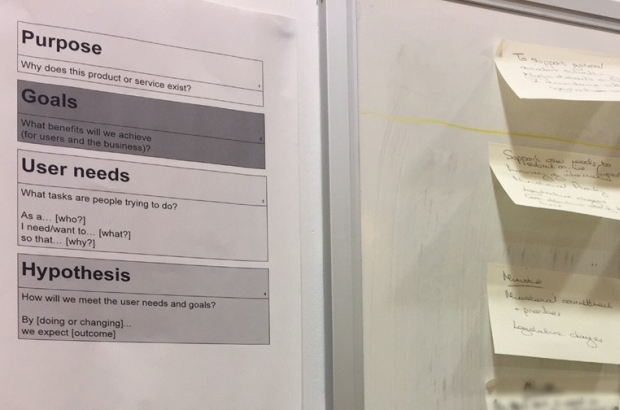

My task was a bit of a blank canvas project. Luckily, the team at GDS had published a blog post about their own experiences, including a template for a performance workshop.

Running similar workshops of our own helped me understand what can be done to improve performance analysis in the department.

Here are three key lessons I’d like to pass on about measuring performance.

Early and often

It is important to involve your performance analyst from the start of a project and to run performance framework workshops early in the process, ideally just before your alpha assessment. If the session at alpha isn't sufficient, repeat it at beta, when you will have more research to work with.

If, once the service goes live, you find that the measures you chose at alpha and beta are not quite right, run the workshop again. Keep repeating it until you have performance indicators that measure what you want to know about the service.

Google Analytics is not the be all and end all

Don’t just use your performance analyst as a Google Analytics (GA) puppet.

GA reports are quick to set up, but they can only measure goals on the website. Use your analyst to help you develop metrics across the whole service, including the parts that GA can't track.

Ask yourself: are the goals you set up meaningful and useful? Will they help improve the service as a whole, or are there more performance measures that you need to examine? What does success look like?

The 4 digital service standard measures aren’t the only important ones

Teams often end up relying solely on the 4 performance measures listed in the service manual. They should be developing more of their own, particularly in areas that GA cannot capture.

For example, does the business unit have internal performance measures or service level agreements that the digital service could improve on? If so, put processes in place to measure them before the service goes live, so you have a baseline to compare against.

Treat this as an opportunity to test hypotheses about user behaviour or service improvements. The results will help shape future iterations of the product.

What’s next?

Our next steps will be to encourage further engagement with the performance function by trialling the performance workshops with more teams. We'll also work closely with service managers and their teams to create better measures, and encourage them to think beyond the 4 digital service standard performance measures.

If you're a product owner or delivery manager and would like to organise a performance workshop, please get in contact with the Service Optimisation team.
