Around 21% of the UK adult population lacks basic digital skills. People who are socially excluded, such as people in social housing, those on lower wages or who are unemployed, older people and those with a disability, often face digital exclusion too.

Through the Equality Act and the UK Digital Strategy, we have a responsibility to ensure our services do not discriminate and that those who want to use digital channels are supported.

The digital inclusion scale is an important tool in a researcher’s toolkit to ensure they research with people who have different levels of digital skill.

However, as technology and attitudes towards digital services have developed, is the current digital inclusion scale still fit for purpose?

Home Office designers and researchers came together for an afternoon to understand how the scale is being used in delivery teams, its relevance to our users, and how we could iterate the scale to better visualise our research.

What we found out

Speaking to a broad range of users is crucial for designing an effective service and meeting the Digital Service Standard. We found that the scale does attempt to account for the full spectrum of users’ digital skill levels, and it has allowed us to prove to stakeholders that our research was representative.

Having a standardised way of showing our participants’ digital inclusion across services was also cited as an important reason to use the scale, even though a participant’s position on it is service specific. For example, a participant might feel more confident renewing their driving licence online than completing a residency application.

It also became clear that the Home Office’s approach to digital inclusion has evolved. The increased use of mobile devices makes some researchers feel uncertain about accurately plotting a participant – skill on desktops can differ from skill on mobile devices.

We discussed how people might move from one category to another as they become more confident using digital services. Some said that this movement was implied by the use of a scale, but found that the combination of behaviours and attitudes in the category names made it complicated to follow.

Some found the categories confusing, either because they sometimes overlap or because they are too rigid. Concerns were also raised that the category titles could create a negative perception of users at the lower end of the scale.

It was also said that the categories could do more to unpack socio-economic factors, for example the cost of accessing the internet and how people find workarounds to tackle a lack of digital skill or access.

Digital inclusion mapping for the future

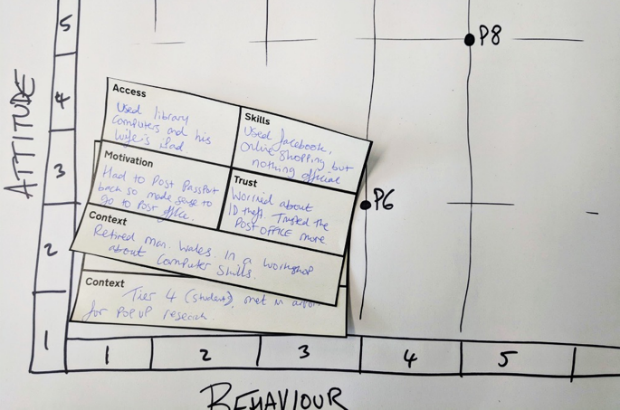

To see how the mapping of digital inclusion could be improved, we filled in cards with user stories from our research, using the barriers highlighted in the GOV.UK Digital Skills and Inclusion blog.

Applying real data allowed us to think about the common barriers that stood out for us across our cards, so we could then map out new visualisation methods and categories for capturing digital inclusion data.

We’re still at a first draft with our ideas, but we were able to collate some overarching findings from our workshop. These are:

- we need to map participants based on a combination of access, skills, motivation and trust

- the context of the service is important

- the device the participant is using plays a part

- any mapping activity should help determine if the user needs assisted digital support

We'd love to hear your thoughts or findings on the scale. Email assisteddigital@digital.homeoffice.gov.uk.

1 comment

Comment by Jane Devine posted on

Great blog post. I have recently been researching with people who have English as a second language and have often found that it is language, rather than digital skills, that is a barrier to them using our services. Invariably they rely on family and friends and also community groups for help.