At the Home Office, usability testing is our go-to method for finding out how well a service will work for people. With expert users we need to understand how well the service will work in the context of their role and wider business processes.

It’s tempting to make these tests a conversation, but this can affect how our users behave and make our results less accurate.

Why asking users to think aloud can make your research less real

In usability tests, we often ask our users to ‘think aloud’ so we can hear what they’re thinking as well as see what they’re doing.

This ‘think aloud’ method usually involves very limited interaction between the user and the researcher. But it can be tempting to talk with participants while they’re using the service.

Interacting with the user while they complete tasks makes the usability test less realistic. In the real workplace users won’t have someone asking them questions about the service as they use it.

Studies have shown that taking an ‘interactive’ approach affects user behaviour, meaning your findings will be less robust and representative.

How cooperative usability testing can help

In 2005, Erik Frøkjær and Kasper Hornbæk, two academics at the University of Copenhagen, proposed a solution to this problem called ‘cooperative usability testing’.

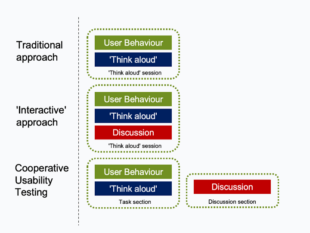

The idea is simple. Instead of banning discussion between the researcher and the user, they suggest splitting the usability test into two sections:

- Task – the user completes the task on their own and thinks aloud, with minimal interventions from the researcher.

- Discussion – the researcher and user step through the journey page by page and join forces to discuss the usability problems the user encountered while they completed the task.

Diagram showing the difference between cooperative usability testing and other approaches.

While the ‘task’ section has the robustness of traditional usability testing, the ‘discussion’ section creates a partnership between the user and researcher.

Frøkjær and Hornbæk found that cooperative usability testing works particularly well with expert users. The more relaxed discussion is a fantastic way of exploiting the user’s specialist domain knowledge and understanding how useful the service is for people in their role.

How to get the most from the method

At the Home Office, we’ve experimented with cooperative usability testing while working with expert users of immigration-related systems.

Here are some tips based on our experiences.

Choose the right project

Not all projects will be appropriate for cooperative usability testing. We’ve found it works best with short tasks as it relies on the user’s memory of that task.

You can use cooperative usability testing with any users, but it works best with expert users. Traditional usability testing may be more appropriate for public-facing services.

Make time for the discussion section

Using cooperative usability testing meant we had to extend the overall session time. When users talk through their actions retrospectively, they can end up repeating what they already said during the task.

Good moderation of the discussion section can help focus the conversation on areas that have not yet been covered and keep the overall session shorter.

Take note taking seriously

As we know, user research is a team sport. With cooperative usability testing we recommend getting the team to note down interesting things that happen during the task section so that you can talk about them in the discussion section.

Ideally, appoint a dedicated note taker who understands the service and the research objectives. We also built regular note taker ‘check ins’ into the discussion section to ensure we were covering all the key issues that emerged on each page.

Be prepared to step into a different role

The shift between the task and discussion sections means researchers need to transition from being a mostly silent observer to playing a much more active role. The user needs to transition from just using the service to providing more reflective commentary.

It can be useful to brief the user at the start of the session about their role. You could say something like this:

‘First, I’m going to ask you to complete the task on your own. I’ll be here if you get really stuck, but mostly I’m just going to watch and listen.’

In our experience, even with expert users, not all participants feel comfortable engaging with the discussion section, especially if they don’t feel they have the right skills or knowledge. Introducing it by saying something like this can help:

‘I’d now like to talk through how you found using the service in a bit more detail. You’re the expert here, so I’m really keen to learn from you about how well the service works for you.’

Distinguish between the different kinds of data you’re collecting

Cooperative usability testing produces distinct kinds of data. The task section produces ‘hard’ data about user behaviour and what people think as they use the service. On the other hand, the discussion section produces ‘softer’ data that is vulnerable to several problems:

- users might misremember what happened in the task or try to rationalise their earlier behaviour

- you risk biasing the data with your existing views as you steer the conversation with your questions

- user opinions are just opinions, and may not reflect actual usability problems or user needs

We recommend you distinguish between the data from the two sections in your analysis, and only base your findings on things evidenced in the more rigorous task section.
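If your team keeps session notes in a structured form, one way to apply this in analysis is to tag each note with the section it came from. The sketch below is only an illustration of that idea, not a tool we use: the `Observation` class, the `summarise` function and the example notes are all hypothetical. It treats an issue as a finding only if it was seen in the task section, and keeps discussion-only issues aside as context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One note from a session (hypothetical structure for illustration)."""
    issue: str    # short label for the usability issue
    section: str  # "task" or "discussion"
    note: str     # what was observed or said


def summarise(observations):
    """Split issues into findings (seen in the task section) and context only."""
    # An issue counts as a finding only if at least one note came from the task section.
    task_issues = {o.issue for o in observations if o.section == "task"}
    findings, context_only = {}, {}
    for o in observations:
        bucket = findings if o.issue in task_issues else context_only
        by_section = bucket.setdefault(o.issue, {"task": [], "discussion": []})
        by_section[o.section].append(o.note)
    return findings, context_only


# Invented example notes, not real research data.
notes = [
    Observation("search filters", "task", "Scrolled past the filter panel twice"),
    Observation("search filters", "discussion", "Expected filters above the results"),
    Observation("case summary", "discussion", "Would like a printable summary"),
]

findings, context_only = summarise(notes)
print("Findings:", list(findings))
print("Context only:", list(context_only))
```

Discussion notes attached to a finding stay alongside the task evidence, so they can still explain why the problem matters to people in that role.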

Despite these issues, we’ve found the data in the discussion section extremely useful, especially in providing context on expert user behaviours and revealing their specific needs.

Want to make an impact?

User-centred design at the Home Office is about designing our products and services in collaboration with the people who will use them. We work on some of the most challenging and important government services. Our work helps to keep people safe and the country secure.

You can find out more about user-centred design roles at our Home Office Careers website.
