Being clear about the desired outcomes of a service or of stages within a service is a useful way to think about why something exists or why it happens, without slipping into talking about the way in which it currently happens.

It’s easy to confuse outcomes with something else, such as a goal, a change, or an output or deliverable of a project. So we define an outcome as the end result of a policy, a service, or part of a service. It’s the actual thing that you want to make happen.

| Outcomes | Ways of achieving that outcome (but not the only way) |
| --- | --- |
| Organisation gets the right data to make a decision about eligibility | A register, an API, an online form |
| A user knows what they can and can’t apply for | A check before you start pattern and new content on GOV.UK |

The output of a project might be ‘We made an application form work online,’ or ‘We have some new content on GOV.UK.’ A goal might be ‘To reduce the volume of inaccurate applications,’ or ‘To speed up decision making.’ These are different to the desired outcomes for a service or stage.

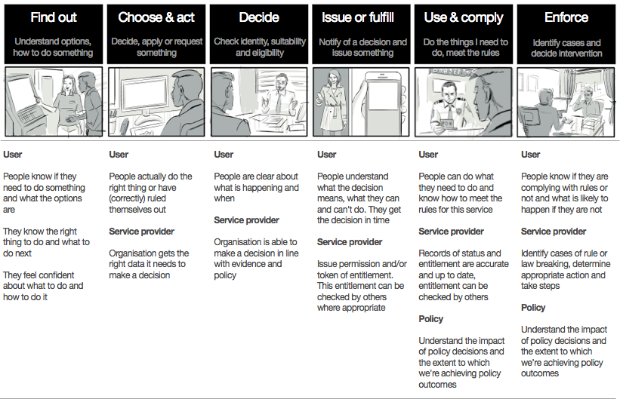

Outcomes for a stage in a service

Examples of good outcomes are ‘People know exactly what to do or what not to do,’ or ‘An organisation has the right data it needs to make a decision.’

Achieving these outcomes should be the result of hard work and experimentation to learn the best (fastest, cheapest, simplest) way to do it. The best approach may change over time – but the desired outcome is likely to stay fairly constant. That stability is what makes outcomes worth identifying.

The outcomes for users shown here are essentially written as core user needs that have been met – ie, the result of what happens if this service or stage performs well.

Similarly, the outcomes for service providers (eg, government) are written as the result of what happens if this service or stage is run in the most efficient and effective way possible.

In many ways, these outcomes read like common sense. But by articulating the desired outcomes and making them visible to everyone, you have a basis against which to compare all the possible ways of doing something – whether by using the latest technology, by reusing a common platform, by doing something manually or by automating it.

Outcomes and managing work

The desired outcome for a whole service should reflect policy and strategic intent.

Large end-to-end services can have dozens of teams working to improve various transactions or to build new ways of doing things.

A common problem in any large organisation is that it’s hard to keep track of whether the result of all of the work adds up to a cohesive and significant improvement.

Each team or project may have its own business case, its own articulated and anticipated benefits, even its own strategy. And yet each is really just 1 part of the same service, which is meant to contribute cohesively towards the desired outcomes. Decision makers may be asked to sign off investment on a case-by-case basis, without always being aware of the wider service context.

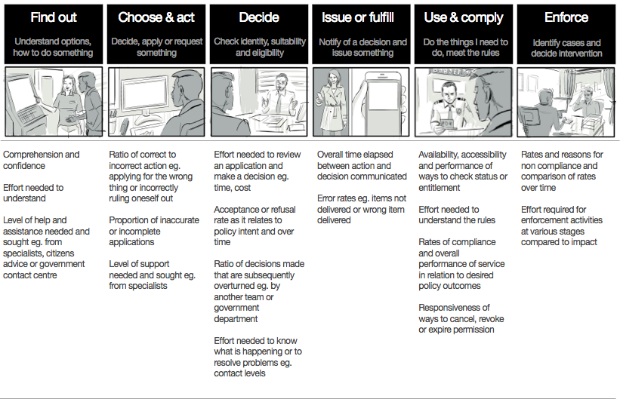

Measuring against outcomes

One way to tackle a lack of cohesion is to clearly articulate how you would measure the desired outcomes at each stage of a whole service. Once you have these, you can compare options to see which would contribute more towards these outcomes.

When a decision maker then reviews all the work that’s taking place, they have a solid basis for steering and directing it, for seeing what’s missing, and for challenging the direction of a project if the team is working in complete isolation from either outcomes or measures.

When individual teams come together to work on a project, they’ll have a way to understand the wider context for that work and what it’s trying to achieve. And yet it still leaves them with enough autonomy and room to experiment around which of multiple options will best deliver those outcomes.

It also helps them to see which other teams they need to be working more closely with to achieve shared outcomes. We’re experimenting now with ways for teams to frame and measure the work they’re doing, so that it all relates to the wider context.

Bridging the gap between services and IT portfolio management

Framing work in terms of end-to-end services doesn’t particularly help with understanding common capabilities, products or platforms that are reusable across services. It’s not in conflict with that either: there are other ways to look at those things. The intention is not to oversimplify what appears to be a very difficult and complex activity – effectively managing, reviewing and controlling large portfolios of work. That would be naive.

But we do need practical ways to start to bridge the gap between the ambition to orient government (and any large organisation) around end-to-end services that meet user needs and the realities on the ground of traditional portfolio and delivery management approaches.

Outcomes – and measuring against them – are a way to set out what we want to achieve without dictating or ‘arm’s-length designing’ how it’s done. They transcend organisational boundaries. And so shared outcomes can effectively align teams across portfolios, organisations or departments.

When to use outcomes

Outcomes and their measures can be a useful lens to help consider:

- all the work that’s happening across an end-to-end service

- how to frame new work before it starts

- how to review what’s being delivered within a traditional delivery portfolio for what’s missing, needs aligning, or needs to be stopped

- how to write business cases or review spend control decisions